One of the lasting outcomes of our Total Recal ‘rapid innovation’ project in 2010, was that Alex Bilbie wrote the first (and only) OAuth 2.0 server for the CodeIgniter PHP development framework that we use. Since then, he’s been refining it and with every new project, we’ve been using it as part of our API-driven approach to development. As far as we know, the use of the OAuth 2.0 specification, which should be finalised at a forthcoming IETF meeting, is not yet being used by any other university in the UK. There are a few examples of OAuth revision A in use, but OAuth 2.0 is a major revision currently in its 23rd draft.

As a result of his work, Alex was invited to talk about OAuth 2.0 at Eduserv’s Federated Access Management conference last year.

Nick Jackson gave the same presentation at the Dev8D conference a couple of weeks ago.

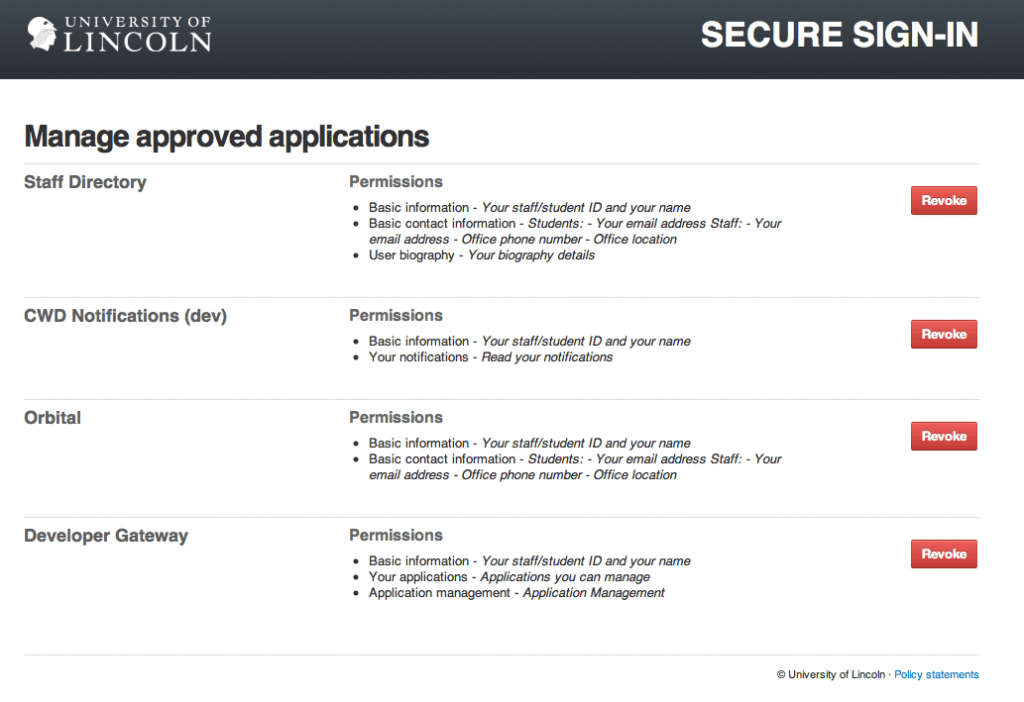

Since Total Recal, we’ve used OAuth 2.0 for Jerome, data.lincoln.ac.uk, Zendesk, Get Satisfaction, and more recently Orbital and now ON Course. We’re at the stage where our ‘single sign on’ domain https://sso.lincoln.ac.uk is the gateway to our OAuth 2.0 implementation and it will soon be running on two servers for redundancy. In short, due to various JISC projects helping pave the way, it has been formally adopted by central ICT Services, and staff and students are gradually being given control over what services their identity is bound to and what permissions those services have.

The work Nick is doing on the Orbital project is extending Alex’s OAuth 2.0 server to include some of the optional parts of the specification which we’ve not been using at Lincoln, such as refresh tokens and using HTTP Authentication with the client credentials flow. This means that the server is able to drop straight in to a wider range of projects and services.

Recently, JISC published a call for project proposal around Access and Identity Management (AIM), which I am starting to write a bid for. Appendix E1 states:

JISC is particularly interested in seeing innovative and new uses for OAuth. Bids should show how this technology brings benefits to the community and can help address institutional requirements within research, teaching and learning, work based learning, administration and Business Community Engagement.

In Total Recal, we released version 1 of the server code but have learned a lot since that project through integrating OAuth with other services. Version 2 of our OAuth server is more representative of our current implementation and fully implements the latest draft (23) of the specification.

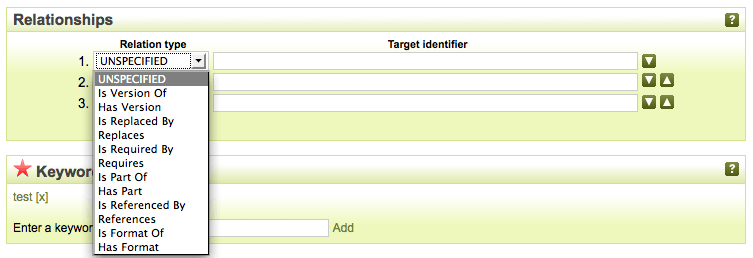

However, this is what access and identity management currently looks like:

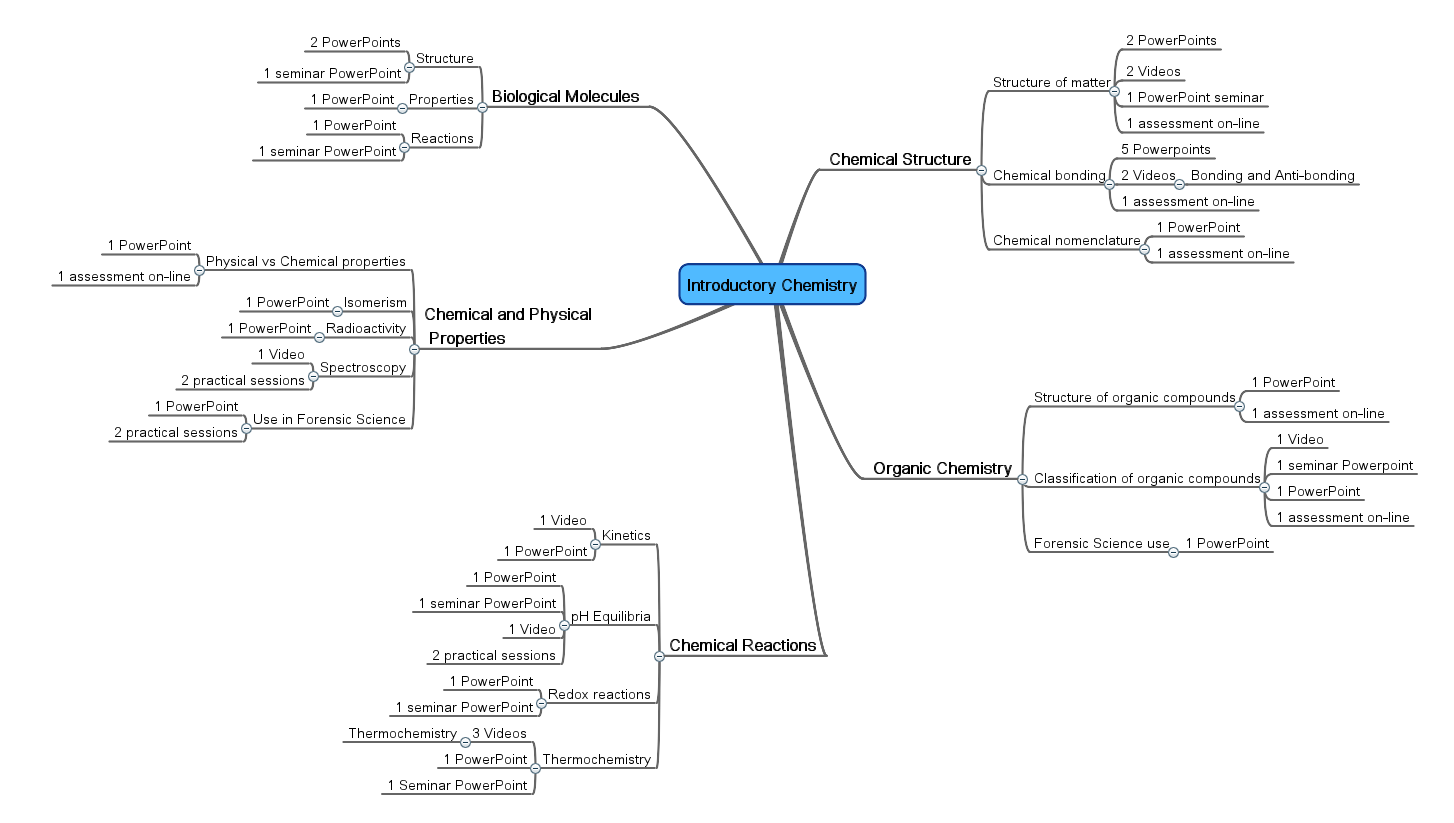

At the moment, the most widespread use of the OAuth server is Zendesk, our ICT and Estates online support service. Projects such as Jerome, Orbital, and ON Course, as well as three 3rd year Computer Science student dissertation projects are using it, too. The plan is to use OAuth alongside Microsoft’s Unified Access Gateway (UAG), which can talk SAML to OAuth via the OAuth SAML 2.0 specification. Here’s what we intend to do:

The primary driver for this is the ‘student experience’ and it cuts three ways:

- Richer sharing of data between applications: A student or lecturer should be able to identity themselves to multiple applications and approve access to the sharing of personal data between those applications.

- A consistent user experience: What we’re aiming for at first is not strictly ‘single sign on’, but rather ‘consistent sign on’, where the user is presented with a consistent UX when signing into disparate applications.

- Rapid deployment: New applications that we develop or purchase should be easier to implement, plugging into either OAuth or the UAG and immediately benefiting from 1) and 2).

Following a recent meeting between ICT and the Library, we agreed to take the following steps:

- All library (and ICT) applications that we operate internally must have Active Directory sign-in instead of local databases. Almost all of our applications achieve this already. This is the first step towards step (3).

- All web-based applications must offer a consistent looking sign-in screen based on the sso.lincoln.ac.uk design (which uses the Common Web Design). This is the second step towards (3).

- All systems must implement web-based single sign on via OAuth, SAML or ADFS and they will be sent to either UAG or the OAuth/SAML server.

The library are going to investigate to what extent we can do (2) with their applications such as Horizon and EPrints, and from then on, systems that are purchased or updated must do (3). It also makes sense to look at EPrints and WordPress in the short-term as applications that can use OAuth.

Two of the outputs we’ll propose to JISC are a case study of this work, as well as further development of the open source server Alex and Nick have been developing including an implementation of the OAuth SAML specification that we’ll share. Like our related work on staff profiles, the need to get access and identity right is becoming increasingly apparent as staff and students become accustomed to the way access and identity works elsewhere on the web. For Lincoln, a combination of OAuth and UAG is the preferred route to achieving consistent sign on across all applications, bridging both the internally facing business applications managed by ICT (e.g. Sharepoint, Exchange, Blackboard) and the more outward facing academic and social applications such as those developed and run by the Library and the Centre for Educational Research and Development.